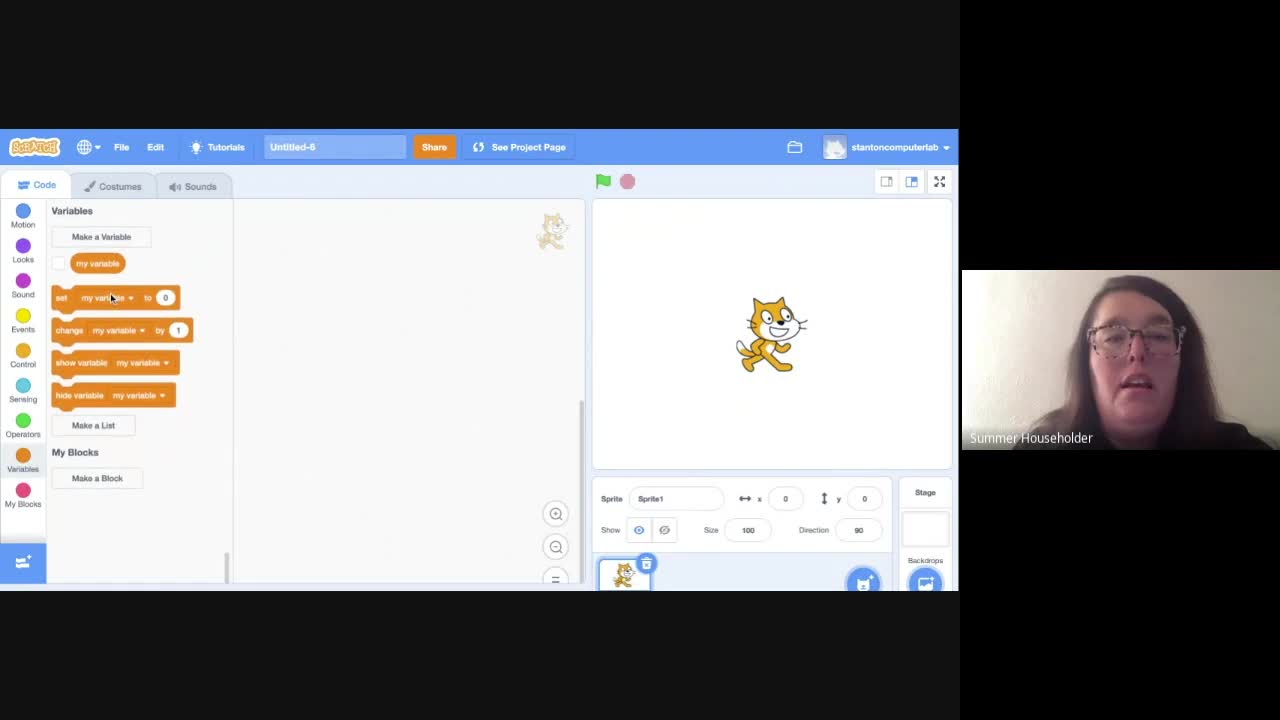

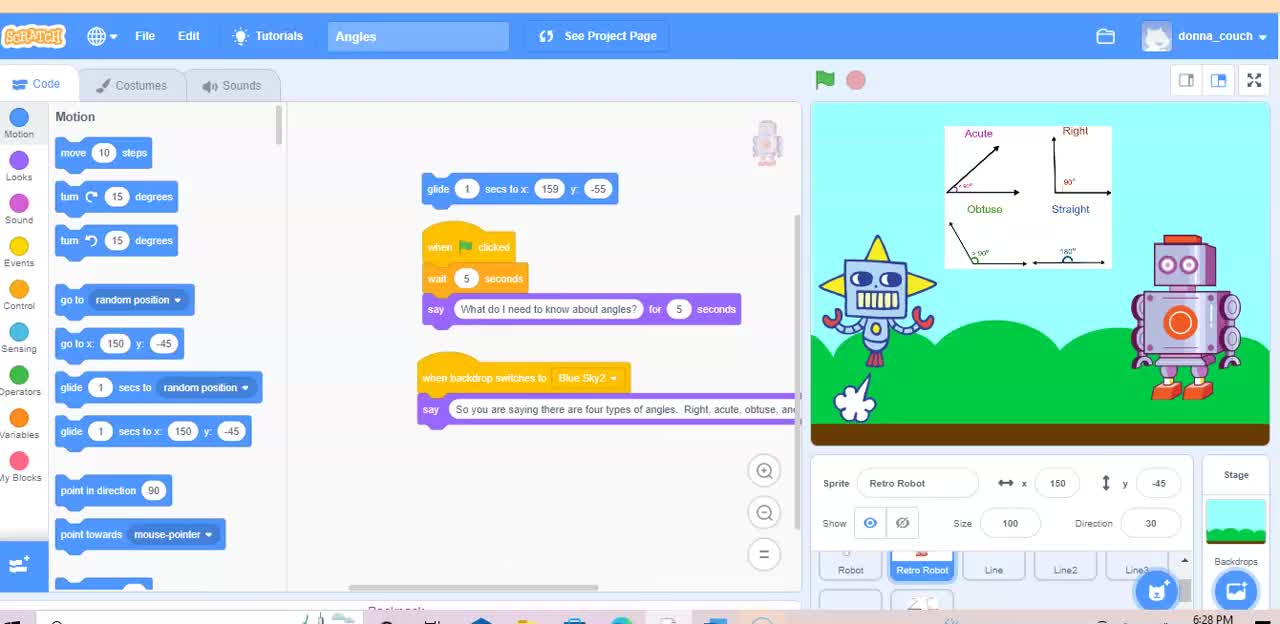

Tokenization & Lexical Analysis

Tokenization, or lexical analysis, loads (deserializes) structured input data into memory as an implicit chain of tokens. This prepares the input for subsequent processing, such as syntactic and semantic analysis, conversion, parsing, translation, or execution.
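As an illustration, here is a minimal Python sketch of a regex-based tokenizer. It assumes a tiny made-up expression language. The token categories (`NUMBER`, `IDENT`, `OP`, and so on), the `Token` tuple, and the `tokenize` function are hypothetical names chosen for this example and do not belong to any particular tool. Because `tokenize` is a generator, the tokens form a lazily produced chain that a parser can consume one at a time.

```python
import re
from typing import Iterator, NamedTuple


class Token(NamedTuple):
    kind: str   # token category, e.g. "NUMBER" or "IDENT" (hypothetical names)
    text: str   # the exact characters (lexeme) matched in the input
    pos: int    # offset of the lexeme in the input string


# Hypothetical token categories for a tiny expression language.
# Alternatives are tried left to right at each input position.
TOKEN_SPEC = [
    ("NUMBER", r"\d+(?:\.\d+)?"),
    ("IDENT", r"[A-Za-z_]\w*"),
    ("OP", r"[+\-*/=]"),
    ("LPAREN", r"\("),
    ("RPAREN", r"\)"),
    ("SKIP", r"\s+"),      # whitespace separates tokens but is not emitted
    ("MISMATCH", r"."),    # any other single character is a lexical error
]
MASTER_RE = re.compile("|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC))


def tokenize(source: str) -> Iterator[Token]:
    """Lazily yield tokens from source, scanning left to right."""
    for match in MASTER_RE.finditer(source):
        kind = match.lastgroup  # name of the alternative that matched
        text = match.group()
        if kind == "SKIP":
            continue
        if kind == "MISMATCH":
            raise SyntaxError(f"unexpected character {text!r} at offset {match.start()}")
        yield Token(kind, text, match.start())


if __name__ == "__main__":
    for tok in tokenize("area = width * (height + 2.5)"):
        print(tok)
```

Running the script prints one `Token` per lexeme, for example `Token(kind='NUMBER', text='2.5', pos=25)`. Whitespace is discarded, and an unrecognized character raises `SyntaxError`. A parser would then read this token stream instead of raw characters.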